Introduction #

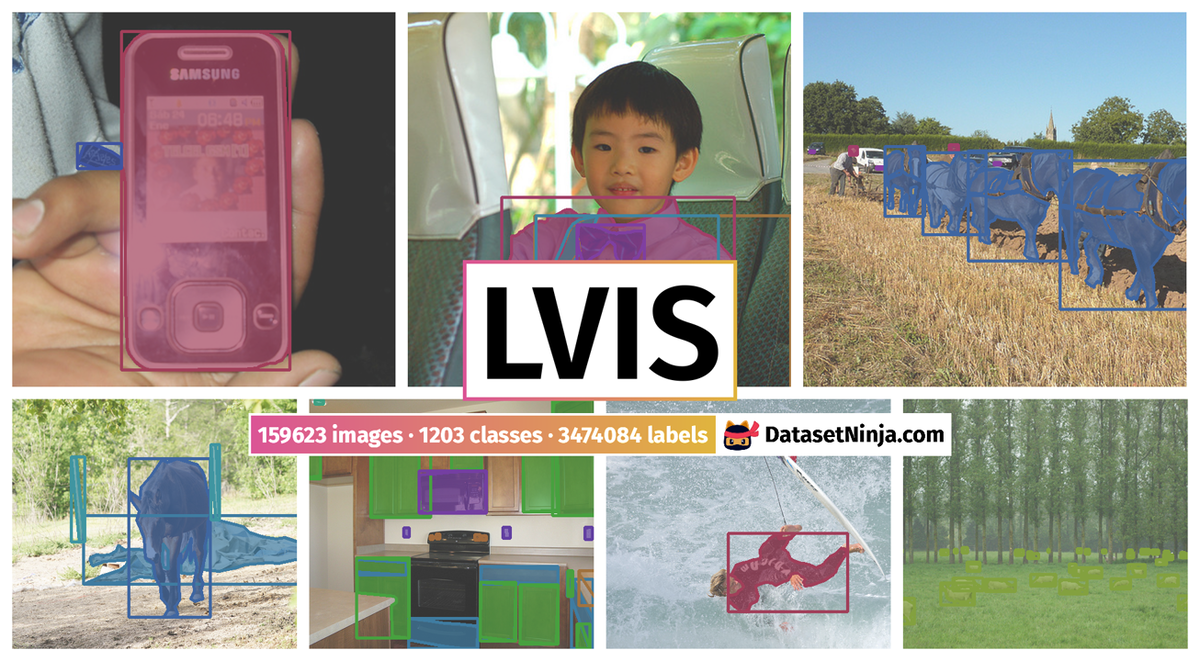

In the realm of object detection, the authors of the LVIS (Large Vocabulary Instance Segmentation) dataset recognized the pivotal role of datasets in directing the research community’s attention to various challenges. This evolution has taken researchers from simple images to complex scenes and from bounding boxes to segmentation masks. The authors’ aim is to amass approximately 2 million high-quality instance segmentation masks, encompassing over 1000 entry-level object categories found in 164,000 images. Given the Zipfian distribution of categories in natural images, LVIS inherently exhibits a long tail of categories with limited training samples. As contemporary deep learning techniques for object detection tend to perform inadequately in scenarios with limited training data, the authors view their dataset as an essential and compelling new avenue for scientific exploration.

One of the primary objectives of computer vision is to imbue algorithms with the capability to intelligently describe images. Object detection serves as a fundamental task in image description, as it aligns with intuitive appeal, practical utility, and ease of benchmarking in existing contexts. Noteworthy enhancements have been made in object detector accuracy, alongside the development of novel capabilities like predicting segmentation masks and 3D representations. This progression opens up exciting possibilities to extend these methods toward new objectives. Presently, the rigorous evaluation of general-purpose object detectors primarily takes place within a limited category regime (e.g., 80 categories) or when a substantial number of training examples are available for each category (e.g., 100 to 1000+). Consequently, there is an opportunity to foster research in a more natural setting where numerous categories coexist, and data per category may be sparse. The presence of a long tail of rare categories is inescapable, and the act of annotating additional images often uncovers previously unseen, rare categories.

Efficiently learning from a minimal number of examples presents a significant open challenge in the realms of machine learning and computer vision. The authors regard this opportunity as one of the most thrilling, both from a scientific and practical perspective. However, to delve into this area empirically, the authors recognized the necessity for an appropriate, high-quality dataset and benchmark.

To facilitate this novel research direction, the authors designed and curated LVIS, a benchmark dataset dedicated to research in Large Vocabulary Instance Segmentation. The authors’ annotation process commences from a collection of images obtained without prior knowledge of the categories to be labeled within them. They engage annotators in an iterative process of object spotting, uncovering the long tail of categories that naturally emerge in the images while avoiding the use of machine learning algorithms for automated data labeling.

The authors made a significant observation that the desired evaluation protocol did not mandate exhaustive annotation of all images with all categories. Instead, the key requirement was the existence of two distinct subsets, the Positive set (Pc) and the Negative set (Nc), for each category. The Positive set consists of images in which all instances of category are segmented exhaustively, while the Negative set contains images where no instance of category is present. These subsets enable standard COCO-style AP evaluation for each category. Crucially, the evaluation oracle assesses the algorithm’s performance for a given category only on the subset of images where category has been exhaustively annotated. If a detector reports a detection of category on an image outside of this subset, the detection remains unevaluated. By consolidating these per-category sets into a single dataset, the authors introduced the concept of a federated dataset. A federated dataset is an amalgamation of smaller constituent datasets, each resembling a traditional object detection dataset for a single category. The freedom to avoid annotating all images with all categories while maintaining ample inter-annotator agreement reduced ambiguity in the annotation process and significantly reduced the workload.

Category relationships from left to right: non-disjoint category pairs may be in partially overlapping, parent-child, or equivalent (synonym) relationships, implying that a single object may have multiple valid labels. The fair evaluation of an object detector must take the issue of multiple valid labels into account.

Furthermore, the authors emphasized that membership in the Positive and Negative sets for the test split remained undisclosed. Algorithms had no prior knowledge of which categories would be evaluated in each image. Consequently, an algorithm was required to make predictions for all categories in each test image.

Annotation Pipeline

-

Object Spotting, Stage 1: In this initial stage, the goals are to generate the Positive set (Pc) for each category in the vocabulary and elicit vocabulary recall by including various object categories in the dataset. Object spotting is an iterative process where each image is visited multiple times. Annotators mark one object with a point and assign it a category from the vocabulary. Subsequent visits involve marking unmarked objects of previously unmarked categories. If an image is skipped three times, it is no longer visited.

-

Exhaustive Instance Marking, Stage 2: The objectives of this stage are to verify annotations from Stage 1 and mark all instances of category c in images belonging to Pc. Each pair (i, c) from Stage 1 is sent to five annotators for verification. Annotators mark all instances of the same category if the label matches. Frequent categories are subsampled to avoid dominance.

-

Instance Segmentation, Stage 3: In this stage, the focus is on verifying category labels from Stage 2 and upgrading point annotations to full segmentation masks. An annotator is tasked with verifying the category label and, if correct, drawing a detailed segmentation mask for the marked object instance.

-

Segment Verification, Stage 4: The primary goal of this stage is to verify the quality of segmentation masks from Stage 3. Annotators rate the quality of each segmentation mask, with a mask accepted only if four annotators agree it is high-quality.

-

Full Recall Verification, Stage 5: This stage completes the Positive sets. Annotators are asked if there are any unsegmented instances of category c in images belonging to Pc. An image is considered exhaustive only if at least four annotators agree; otherwise, the exhaustive annotation flag is marked as false.

-

Negative Sets, Stage 6: In the final stage, the authors collect Negative sets (Nc) for each category c by randomly sampling images from D that do not belong to Pc. Images where any annotator reports the presence of category c are rejected, while the rest are added to Nc.

The output of these stages yields a comprehensive dataset with Positive and Negative sets for each category, along with high-quality segmentation masks.

Vocabulary Construction

The authors constructed the vocabulary (V) using an iterative process. They began with a large super-vocabulary of 8.8k synsets from WordNet, selected based on concrete common nouns. Subsequently, they applied object spotting to 10k COCO images, using the super-vocabulary for autocomplete. This iterative process was repeated, resulting in a final vocabulary containing 1723 synsets.

Summary #

LVIS: A Dataset for Large Vocabulary Instance Segmentation v1.0 is a dataset for instance segmentation, semantic segmentation, and object detection tasks. It is applicable or relevant across various domains.

The dataset consists of 159623 images with 3474084 labeled objects belonging to 1203 different classes including tennis_racket, short_pants, ski_pole, and other: sock, skateboard, bench, umbrella, chair, pillow, person, dog, sunglasses, sofa, belt, jean, drawer, ski, spectacles, backpack, necklace, necktie, horse, trousers, wheel, flag, hat, plate, shoe, and 1175 more.

Images in the LVIS dataset have pixel-level instance segmentation annotations. Due to the nature of the instance segmentation task, it can be automatically transformed into a semantic segmentation (only one mask for every class) or object detection (bounding boxes for every object) tasks. There are 40609 (25% of the total) unlabeled images (i.e. without annotations). There are 4 splits in the dataset: training set (100170 images), test challenge (19822 images), test dev (19822 images), and validation set (19809 images). The dataset was released in 2019 by the Facebook AI Research (FAIR).

Explore #

LVIS dataset has 159623 images. Click on one of the examples below or open "Explore" tool anytime you need to view dataset images with annotations. This tool has extended visualization capabilities like zoom, translation, objects table, custom filters and more. Hover the mouse over the images to hide or show annotations.

Class balance #

There are 1203 annotation classes in the dataset. Find the general statistics and balances for every class in the table below. Click any row to preview images that have labels of the selected class. Sort by column to find the most rare or prevalent classes.

Class ㅤ | Images ㅤ | Objects ㅤ | Count on image average | Area on image average |

|---|---|---|---|---|

tennis_racket➔ any | 2364 | 10006 | 4.23 | 5.33% |

short_pants➔ any | 2348 | 14710 | 6.26 | 3.98% |

sock➔ any | 2334 | 17577 | 7.53 | 1.15% |

ski_pole➔ any | 2334 | 24061 | 10.31 | 5.65% |

skateboard➔ any | 2328 | 9590 | 4.12 | 5.56% |

bench➔ any | 2326 | 16655 | 7.16 | 17.24% |

umbrella➔ any | 2321 | 25843 | 11.13 | 18.75% |

pillow➔ any | 2319 | 16156 | 6.97 | 12.53% |

chair➔ any | 2319 | 36538 | 15.76 | 13.95% |

person➔ any | 2318 | 38506 | 16.61 | 28.82% |

Images #

Explore every single image in the dataset with respect to the number of annotations of each class it has. Click a row to preview selected image. Sort by any column to find anomalies and edge cases. Use horizontal scroll if the table has many columns for a large number of classes in the dataset.

Class sizes #

The table below gives various size properties of objects for every class. Click a row to see the image with annotations of the selected class. Sort columns to find classes with the smallest or largest objects or understand the size differences between classes.

Class | Object count | Avg area | Max area | Min area | Min height | Min height | Max height | Max height | Avg height | Avg height | Min width | Min width | Max width | Max width |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

banana any | 123521 | 0.7% | 94.22% | 0% | 1px | 0.23% | 640px | 100% | 38px | 7.71% | 1px | 0.16% | 640px | 100% |

book any | 82775 | 0.46% | 100% | 0% | 1px | 0.21% | 639px | 100% | 41px | 8.56% | 1px | 0.16% | 640px | 100% |

carrot any | 48034 | 0.68% | 74.31% | 0% | 1px | 0.16% | 587px | 100% | 39px | 8.26% | 2px | 0.31% | 640px | 100% |

pole any | 44246 | 0.48% | 73.44% | 0% | 1px | 0.16% | 640px | 100% | 63px | 13.42% | 1px | 0.16% | 640px | 100% |

apple any | 42951 | 0.81% | 81.25% | 0% | 1px | 0.23% | 480px | 100% | 35px | 7.39% | 1px | 0.2% | 640px | 100% |

person any | 38506 | 2.89% | 100% | 0% | 1px | 0.23% | 640px | 100% | 77px | 16.38% | 1px | 0.16% | 640px | 100% |

chair any | 36538 | 1.37% | 100% | 0% | 1px | 0.16% | 640px | 100% | 50px | 10.53% | 1px | 0.16% | 640px | 100% |

sheep any | 35951 | 1.41% | 86.09% | 0% | 1px | 0.21% | 582px | 100% | 41px | 9.29% | 1px | 0.16% | 640px | 100% |

orange_(fruit) any | 32723 | 0.97% | 99.18% | 0% | 2px | 0.31% | 607px | 100% | 38px | 8.08% | 1px | 0.2% | 612px | 100% |

car_(automobile) any | 32516 | 1.52% | 100% | 0% | 1px | 0.16% | 640px | 100% | 36px | 7.78% | 1px | 0.16% | 640px | 100% |

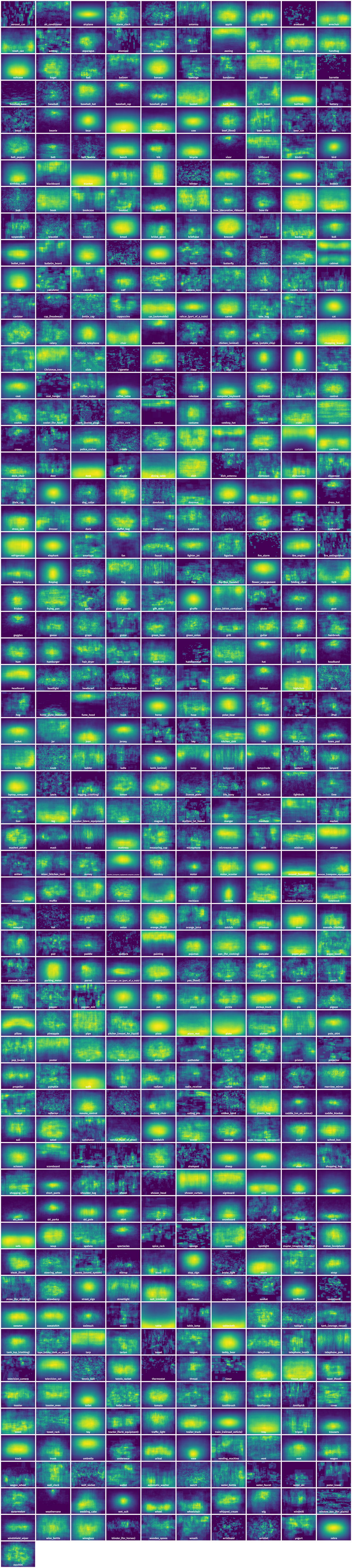

Spatial Heatmap #

The heatmaps below give the spatial distributions of all objects for every class. These visualizations provide insights into the most probable and rare object locations on the image. It helps analyze objects' placements in a dataset.

Objects #

Table contains all 95085 objects. Click a row to preview an image with annotations, and use search or pagination to navigate. Sort columns to find outliers in the dataset.

Object ID ㅤ | Class ㅤ | Image name click row to open | Image size height x width | Height ㅤ | Height ㅤ | Width ㅤ | Width ㅤ | Area ㅤ |

|---|---|---|---|---|---|---|---|---|

1➔ | basket any | 000000517068.jpg | 480 x 640 | 108px | 22.5% | 296px | 46.25% | 4.02% |

2➔ | basket any | 000000517068.jpg | 480 x 640 | 108px | 22.5% | 296px | 46.25% | 10.41% |

3➔ | sausage any | 000000517068.jpg | 480 x 640 | 22px | 4.58% | 59px | 9.22% | 0.22% |

4➔ | sausage any | 000000517068.jpg | 480 x 640 | 22px | 4.58% | 59px | 9.22% | 0.42% |

5➔ | sausage any | 000000517068.jpg | 480 x 640 | 29px | 6.04% | 26px | 4.06% | 0.16% |

6➔ | sausage any | 000000517068.jpg | 480 x 640 | 29px | 6.04% | 26px | 4.06% | 0.25% |

7➔ | sausage any | 000000517068.jpg | 480 x 640 | 28px | 5.83% | 23px | 3.59% | 0.13% |

8➔ | sausage any | 000000517068.jpg | 480 x 640 | 28px | 5.83% | 23px | 3.59% | 0.21% |

9➔ | sausage any | 000000517068.jpg | 480 x 640 | 38px | 7.92% | 27px | 4.22% | 0.19% |

10➔ | sausage any | 000000517068.jpg | 480 x 640 | 38px | 7.92% | 27px | 4.22% | 0.33% |

License #

The LVIS annotations along with this website are licensed under a Creative Commons Attribution 4.0 License. All LVIS dataset images come from the COCO dataset; please see link for their terms of use.

Citation #

If you make use of the LVIS data, please cite the following reference:

@dataset{LVIS,

author={Agrim Gupta and Piotr Dollár and Ross Girshick},

title={LVIS: A Dataset for Large Vocabulary Instance Segmentation v1.0},

year={2019},

url={https://www.lvisdataset.org/dataset}

}

If you are happy with Dataset Ninja and use provided visualizations and tools in your work, please cite us:

@misc{ visualization-tools-for-lvis-dataset,

title = { Visualization Tools for LVIS Dataset },

type = { Computer Vision Tools },

author = { Dataset Ninja },

howpublished = { \url{ https://datasetninja.com/lvis } },

url = { https://datasetninja.com/lvis },

journal = { Dataset Ninja },

publisher = { Dataset Ninja },

year = { 2026 },

month = { jun },

note = { visited on 2026-06-08 },

}Download #

Dataset LVIS can be downloaded in Supervisely format:

As an alternative, it can be downloaded with dataset-tools package:

pip install --upgrade dataset-tools

… using following python code:

import dataset_tools as dtools

dtools.download(dataset='LVIS', dst_dir='~/dataset-ninja/')

Make sure not to overlook the python code example available on the Supervisely Developer Portal. It will give you a clear idea of how to effortlessly work with the downloaded dataset.

The data in original format can be downloaded here:

Disclaimer #

Our gal from the legal dep told us we need to post this:

Dataset Ninja provides visualizations and statistics for some datasets that can be found online and can be downloaded by general audience. Dataset Ninja is not a dataset hosting platform and can only be used for informational purposes. The platform does not claim any rights for the original content, including images, videos, annotations and descriptions. Joint publishing is prohibited.

You take full responsibility when you use datasets presented at Dataset Ninja, as well as other information, including visualizations and statistics we provide. You are in charge of compliance with any dataset license and all other permissions. You are required to navigate datasets homepage and make sure that you can use it. In case of any questions, get in touch with us at hello@datasetninja.com.